Training ReadyNow

ReadyNow learns from previous executions and improves warm-up and start-up time incrementally with each new invocation, so you need to train it to get the best performance from your application. ReadyNow gathers data during training runs and stores it in a profile log, so that performance improves after the first run and with each subsequent run.

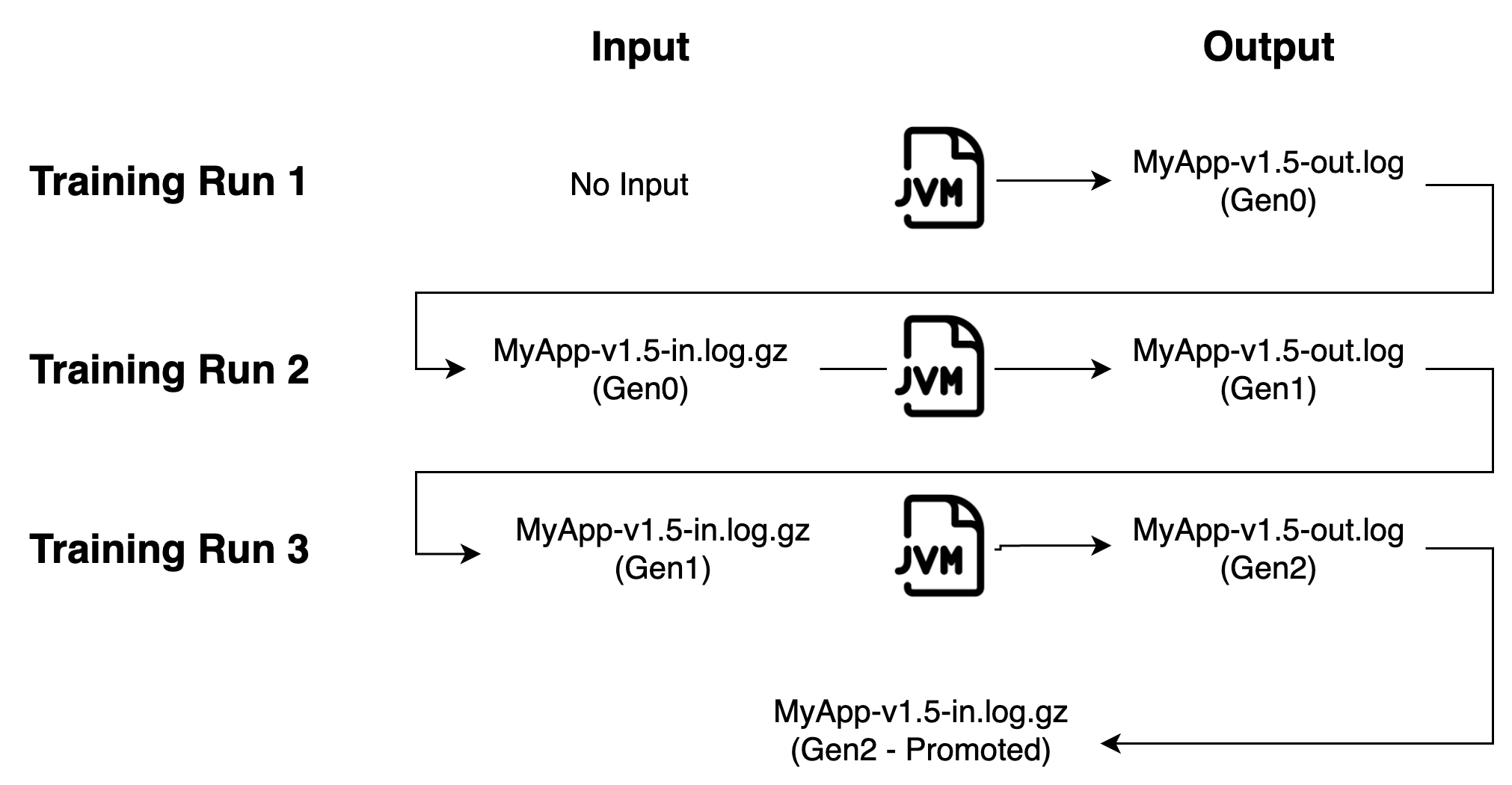

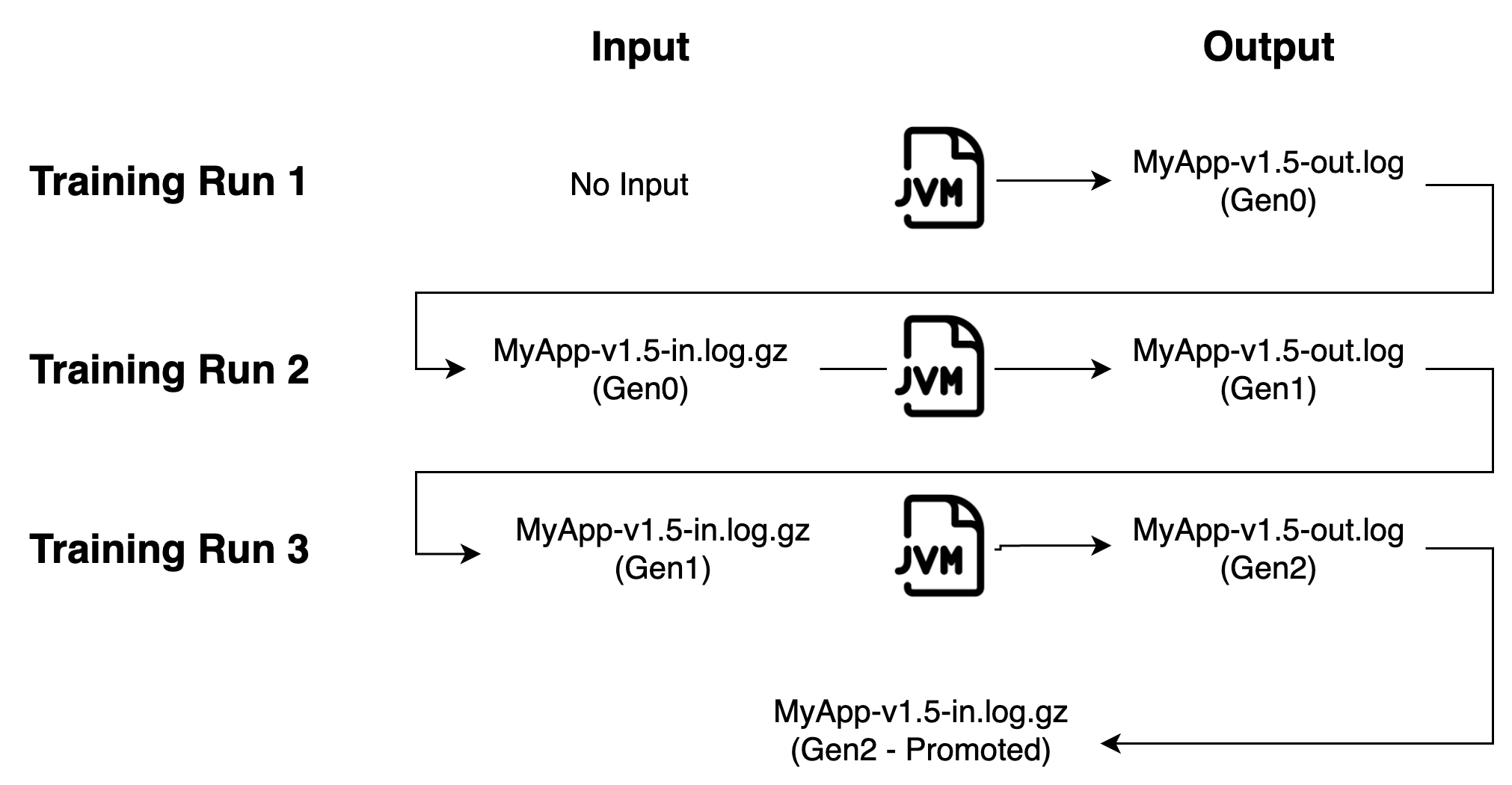

Understanding ReadyNow Generations

When using ReadyNow, you get the best results if you perform several training runs of your application to generate an optimal profile. For example, to generate a good profile for an application instance called MyApp-v1.5, you perform three training runs to record three generations of the ReadyNow profile log. For each training run after the first, you use the generation recorded by the previous training run as the input profile.

Make sure that the output of each training run meets minimum criteria before it is promoted as the input for the next training run. These promotion criteria can be:

- The duration (time) of the training run
- The size of the candidate profile log

When using the ReadyNow Orchestrator feature of Optimizer Hub, the creation of a promoted profile is handled automatically.
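If you promote profiles manually instead, the two criteria above can be checked with a small script. The following is a minimal sketch, not part of ReadyNow: the function name and both threshold values are hypothetical and need to be tuned for your application and workload.

```python
from pathlib import Path

# Hypothetical thresholds; tune them for your application and workload.
MIN_DURATION_SECONDS = 600
MIN_PROFILE_BYTES = 1_000_000


def meets_promotion_criteria(profile_path, run_duration_seconds,
                             min_duration=MIN_DURATION_SECONDS,
                             min_bytes=MIN_PROFILE_BYTES):
    """Return True if a candidate profile log may be promoted."""
    path = Path(profile_path)
    if not path.is_file():
        return False  # the training run wrote no candidate profile
    long_enough = run_duration_seconds >= min_duration
    large_enough = path.stat().st_size >= min_bytes
    return long_enough and large_enough
```

A training script could call `meets_promotion_criteria("profile-generation-0.log", elapsed_seconds)` after each run and use the file as the next input only when the result is `True`.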

Possible Approaches

Depending on your goals and constraints, you can take different approaches to training a ReadyNow profile.

Note: To reach an optimal profile, at least 10,000 executions of all important application methods are needed in each run.

Basic Approach (No Generations)

If time for training ReadyNow is limited, perform one run of your application in the pre-production environment with the following command-line options:

# Pre-Production test run

-XX:ProfileLogOut=profile.log

# Production

-XX:ProfileLogIn=profile.log

This captures a good profile, but you may see occasional outliers in production when the code executes methods differently than it did under the pre-production load.

Recommended Approach with Three Generations

To produce a profile that minimizes or eliminates learning in production, follow this approach.

Train the profile across three separate runs in the pre-production environment with the following command-line options:

# Pre-Production first run

-XX:ProfileLogOut=profile-generation-0.log

# Pre-Production second run

-XX:ProfileLogIn=profile-generation-0.log -XX:ProfileLogOut=profile-generation-1.log

# Pre-Production third run

-XX:ProfileLogIn=profile-generation-1.log -XX:ProfileLogOut=profile-generation-2.log

# Rename profile-generation-2.log to profile.log

# Production

-XX:ProfileLogIn=profile.log

# Production when you want to have a profile for diagnostics purposes

-XX:ProfileLogIn=profile.log -XX:ProfileLogOut=profile_for_diagnostics.log

That is, run the application until it reaches optimal performance, restart the JVM using the profile just generated, and again run the application until it reaches optimal performance. Repeat this once more to create a third-generation profile. The resulting profile log, renamed to profile.log, is the one to use in your production system.
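The chaining of generations can also be expressed in a few lines of code, which helps if you want to script the training runs. This is a minimal sketch: the `training_plan` helper is hypothetical, and it only builds the option strings shown above without launching the JVM.

```python
def training_plan(generations=3, prefix="profile-generation-"):
    """Return one list of JVM options per training run."""
    plan = []
    for gen in range(generations):
        options = []
        if gen > 0:
            # Read the profile recorded by the previous training run.
            options.append(f"-XX:ProfileLogIn={prefix}{gen - 1}.log")
        # Every training run records a new generation.
        options.append(f"-XX:ProfileLogOut={prefix}{gen}.log")
        plan.append(options)
    return plan


for run, options in enumerate(training_plan(), start=1):
    print(f"run {run}: {' '.join(options)}")
```

With the default arguments, the loop prints the same three option sets as the pre-production runs listed above. The first run has no ProfileLogIn because no earlier generation exists yet.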

Rolling Profiles (Continued Generations)

If you cannot create a profile in a pre-production environment, you can configure your application to learn in production by using the same file as input and output. The first run in production warms up poorly because no profile exists yet, but later runs perform better because each one reads the profile written by the previous run.

Use the following command-line options for rolling profiles:

# Production

-XX:ProfileLogIn=profile.log -XX:ProfileLogOut=profile.log

Use Rolling Profiles With Care

Note: The best practice is to use rolling profiles only for training. When a fully trained profile is available, change the configuration to use a fixed file as ProfileLogIn. The rest of this section describes the potential danger of using rolling profiles in production.

When using ReadyNow, the JVM reads the whole ProfileLogIn file at startup. As soon as compilations start, it begins writing the ProfileLogOut file from scratch, which means that it records the compiler behavior into an empty file. In some cases, for example when the JVM crashes shortly after start, the output file is almost empty because few (if any) compilations happened before the crash.

With a rolling profile, where ProfileLogIn and ProfileLogOut are the same file, this overwrites the ReadyNow input and effectively deletes it. Because overwriting is the intended behavior of a rolling profile, no error is thrown and the situation can go unnoticed. When the JVM is restarted, it starts with an essentially empty profile, so no ReadyNow profile is available to help the JIT compiler warm up. This can cause latency spikes and unacceptable behavior in production.
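One way to reduce this risk while you still depend on a rolling profile is to keep a copy of the profile outside the JVM. The following is a minimal sketch of that idea, not a ReadyNow feature: both helper functions and the `min_ratio` threshold are hypothetical, and file size is only a rough proxy for profile quality.

```python
import shutil
from pathlib import Path


def back_up_profile(profile_path, backup_path):
    """Copy a non-empty profile aside before the JVM starts."""
    profile = Path(profile_path)
    if profile.is_file() and profile.stat().st_size > 0:
        shutil.copy2(profile, backup_path)
        return True
    return False


def restore_if_truncated(profile_path, backup_path, min_ratio=0.5):
    """Restore the backup if the new profile is much smaller than it.

    min_ratio is a hypothetical threshold, not a ReadyNow setting.
    """
    profile, backup = Path(profile_path), Path(backup_path)
    if not backup.is_file():
        return False  # nothing to restore from
    new_size = profile.stat().st_size if profile.is_file() else 0
    if new_size < backup.stat().st_size * min_ratio:
        shutil.copy2(backup, profile)
        return True
    return False
```

A wrapper script would call `back_up_profile` before starting the JVM and `restore_if_truncated` after the JVM exits, so that a crash shortly after start does not leave the next run without a usable profile.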

Example Use Cases for Rolling Profiles

Some examples when a rolling profile can be used:

- The JVM gets restarted every night, and the traffic patterns are different between days. For example, the application is used differently on workdays compared to weekends.
- The JVM gets restarted or auto-scales, but the traffic is too low for all important methods to reach the compilation thresholds.

In such cases, using a rolling profile is valid, but only until a fully trained profile is available: for example, once the application has been running for a full week (the first example) or has handled a full load (the second example). At that point, you must reconfigure the application to use the final profile as ProfileLogIn. If needed, you can set a different ProfileLogOut for diagnostic purposes:

# Production once a fully trained profile is available

-XX:ProfileLogIn=profile.log

# Production when you want to have a profile for diagnostics purposes

-XX:ProfileLogIn=profile.log -XX:ProfileLogOut=profile_for_diagnostics.log

Understanding if Training Was Enough

To check whether ReadyNow training was sufficient, check for outliers. The absence of outliers indicates that the training went well. Reduced start-up latency also indicates that the profile log contains enough training data. Otherwise, perform a few more training runs to enrich the profile and get a fully optimized profile log.
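How you detect outliers depends on your own measurements. As a minimal sketch, assuming that you collect request latencies in milliseconds during the warm-up period, the hypothetical helper below flags every sample that exceeds a multiple of the median. The `factor` value is an assumption, not a ReadyNow recommendation.

```python
from statistics import median


def find_outliers(latencies_ms, factor=10.0):
    """Return the samples larger than factor times the median latency.

    factor is a hypothetical threshold; pick one that matches your
    latency requirements.
    """
    if not latencies_ms:
        return []
    limit = median(latencies_ms) * factor
    return [sample for sample in latencies_ms if sample > limit]
```

If the returned list stays empty across restarts with the trained profile, that suggests the profile covers the code paths your production load exercises.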